Hagia Sophia, Conflict, AI

Walking in Istanbul and today’s machine‑mediated conflict

I have been thinking about why conflict is so central to human history, and how AI changes the way conflict works.

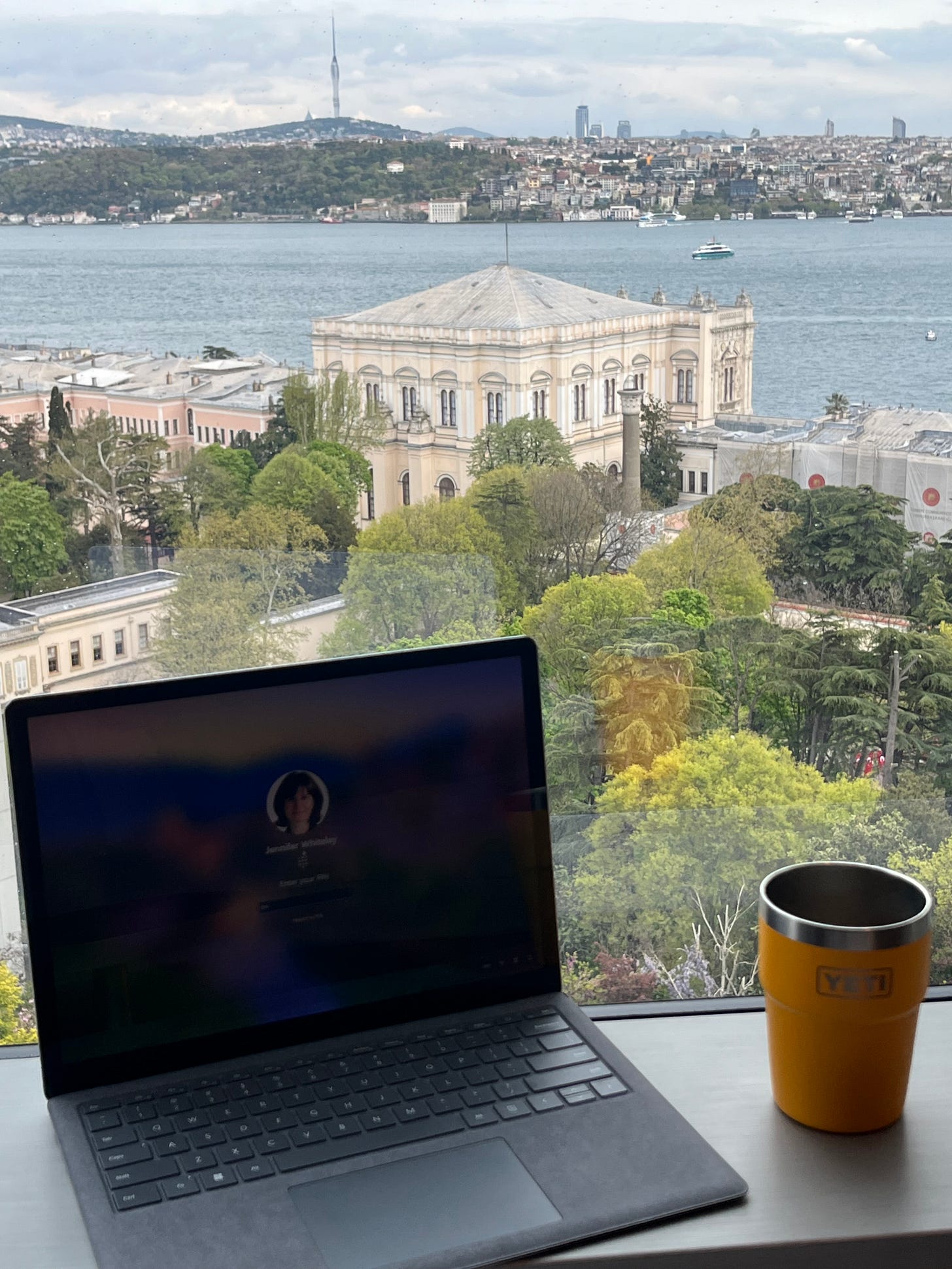

Part of that came from being in Istanbul for the last two weeks. I was here for a NATO event, but I also spent time at Hagia Sophia and the museum, which walks through centuries of conflict, conquest, imperial competition, and political reinvention in this city.

It is hard to sit in NATO discussions about AI, autonomy, interoperability, and decision advantage, then walk through a place shaped by conflict over centuries, and not connect the two.

So let’s think about why conflict is so central in the world, how machine intelligence changes it, what else is possible, and what will have to shift.

Why conflict persists

Conflict persists because it is structurally rooted in power, security, status, and bargaining under uncertainty.

Across history, conflict has been one of the main ways borders were drawn, hierarchies reset, empires built and broken, and new rules imposed. Our institutions grew up inside that world, one where organised violence is treated as the ultimate tool when other options fail. Conflict keeps returning not because we lack ideals or law, but because it has become a load‑bearing mechanism for how humans manage fear, power shifts, and deep uncertainty about each other’s intentions.

What AI changes

AI does not remove these drivers; it changes the medium. It compresses decision cycles, filters and ranks information before humans see it, floods planners with more options than they can realistically review, and shifts contestation into the infrastructure and governance of compute, models, data rules, and evaluation. The result is that conflict becomes less legible as a discrete event and more like an emergent property of coupled, machine‑mediated systems.

The near‑term risk: decision support, not killer robots

The major near‑term risk is not fully autonomous weapons acting alone; that comes later. It is decision‑support systems shaping choices, biasing perception, and weakening human judgment.

“Human in the loop” can remain formally true while being substantively false, because the loop is constrained by machine‑curated perception and compressed time.

Compressed timelines also increase the risk of inadvertent escalation and of feedback loops between systems.

What this world looks like

This world is not Mad Max and not Skynet. It looks like today, with three big differences:

- the background level of contest is higher
- the tempo of crisis is faster
- the real decision often happens before the humans convene

In daily civilian life, most routines continue. People commute, trade, travel, and argue about domestic politics. The change is that the systems underneath normal life are now strategic terrain. Data centers, cloud regions, satellite links, ports, energy grids, and subsea cables are treated as critical national assets because they keep both the economy and the security apparatus coherent. When conflict flares, the first‑order effects are often brownouts, communications problems, supply chain jolts, insurance repricing, and a flood of synthetic media that makes situational awareness harder.

Inside militaries, command posts stop being places where humans primarily construct options. They become spaces where humans manage machine‑curated feeds of ranked alerts, recommended actions, and bundled packages. Staff work becomes curation and exception handling, not plan‑building. Human in the loop thins because the system has already selected inputs, ranked threats, narrowed options, and compressed the time available for dissent. Overriding the system becomes an institutional risk, because it means arguing against something that appears fast and objective.

The conflict environment becomes ambient. There is continual probing and shaping across cyber, influence, and economic domains, plus periodic short, sharp crises that end before traditional diplomacy can fully engage. The most dangerous moments are fast interactions between systems that read each other’s moves as threat signals and escalate in reinforcing loops. Some crises will be born in this coupling and only later be framed politically.

Public truth gets thinner. Synthetic media and AI‑generated propaganda become routine in crises. People stop trying to verify everything and instead fall back on trusted channels, tribes, or authenticated institutional feeds. Legitimacy and provenance become core strategic resources. The competitive edge is not only better models, but institutions that can prove what is true, contest machine outputs, and maintain accountability when machine‑shaped decisions cause harm.

What has to shift

Given that conflict is structurally central and AI is moving upstream into perception and framing, the key competition is over institutional capacity, not just capability.

Priorities that follow:

- Design decision‑support so that judgment remains thick, not ceremonial
- Keep prediction and judgment structurally separate in doctrine and system design
- Make machine outputs auditable and contestable under pressure
- Train for dissent and override as professional competencies, not exceptions
- Build friction and circuit breakers into high‑stakes pipelines
- Treat stack governance, provenance, and sovereign compute as core elements of security and alliance planning

Conflict stays. AI changes how it is produced and where it shows up.

The foresight question is whether our institutions can still interrupt and redirect conflict in a world where the background contest rarely switches off.