The Future According to NVIDIA

Jensen Huang at GTC 2026 and the Price of Intelligence

On March 16, 2026, Jensen Huang walked onstage at GTC in San Jose and delivered what was, beneath the chip announcements and robot demos, an economic thesis. A theory of value.

Intelligence is now manufactured. It is produced in factories, priced by grade, and sold as a commodity. The unit of production is the token, the atomic output of an AI system thinking, reasoning, or acting. And the entire economy is starting to re-organize around who produces tokens, who consumes them, and who captures margin in between.

Let’s examine Huang’s view, and what it could mean for enterprise strategy.

Your Data Centre Is Now a Factory

Data centres are factories. They used to store files. Now they manufacture tokens, the outputs of AI inference. Inference is the live work of a trained model turning inputs into outputs. Every question answered, every document summarized, every line of code generated, every agent reasoning through a problem produces tokens. Tokens are the product.

Think of it this way: when a coding agent writes software, a chatbot answers a customer, or a reasoning model analyzes a document, the organization is effectively buying tokenized intelligence as an operational output.

And like any factory, these facilities are permanently power-constrained. A one-gigawatt data centre will never become two gigawatts. It is physically, atomically limited. So the economics come down to one question: how many tokens can you produce per watt of power, and at what quality?

These two goals are in tension. You can optimize for volume or for intelligence, but not both from the same hardware.
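A little arithmetic makes the tension concrete. The sketch below is illustrative only: the tokens-per-joule figures and the function names are assumptions, not numbers from the keynote. Only the one-gigawatt budget and the $3 and $45 price points come from this article.

```python
# Back-of-envelope model of a power-constrained "token factory".
# The tokens-per-joule figures are hypothetical, chosen only for illustration.

POWER_WATTS = 1_000_000_000  # fixed one-gigawatt budget (1 W = 1 joule/second)


def tokens_per_hour(power_watts: float, tokens_per_joule: float) -> float:
    """Tokens produced in one hour at a given energy efficiency."""
    return power_watts * tokens_per_joule * 3600


def revenue_per_hour(power_watts: float, tokens_per_joule: float,
                     price_per_million_usd: float) -> float:
    """Hourly revenue if every token sells at the given price per million."""
    millions = tokens_per_hour(power_watts, tokens_per_joule) / 1_000_000
    return millions * price_per_million_usd


# Two hypothetical ways to run the same hardware:
# many cheap tokens from small models, or fewer expensive tokens from large ones.
volume = revenue_per_hour(POWER_WATTS, tokens_per_joule=2.0,
                          price_per_million_usd=3.0)
quality = revenue_per_hour(POWER_WATTS, tokens_per_joule=0.2,
                           price_per_million_usd=45.0)

print(f"high-volume configuration:  ${volume:,.0f}/hour")
print(f"high-quality configuration: ${quality:,.0f}/hour")
```

Under these made-up numbers the slower, smarter configuration earns more per hour despite producing a tenth of the tokens. With different assumptions the result flips, which is why both throughput and price per token matter.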

This is where it gets interesting for non-NVIDIA companies: Huang showed that tokens will be priced in tiers, just like any commodity market. A free tier (high volume, small models) for user acquisition. A standard tier at roughly $3–6 per million tokens for general business. A premium tier at ~$45/M for frontier reasoning. And an ultra tier at ~$150/M for extended research and critical-path engineering.
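To see what the tiers mean for a budget, here is a minimal sketch that turns the quoted prices into a cost estimate. The prices are the ones cited above; the dictionary, the function name, and the 50-million-token workload are illustrative assumptions.

```python
# Tier prices in USD per million tokens, taken from the figures quoted above.
# Each tier maps to a (low, high) range; names here are illustrative.
TIER_PRICES_USD_PER_MILLION = {
    "free": (0.0, 0.0),
    "standard": (3.0, 6.0),
    "premium": (45.0, 45.0),   # quoted as approximately $45/M
    "ultra": (150.0, 150.0),   # quoted as approximately $150/M
}


def cost_range(tier: str, tokens: int) -> tuple[float, float]:
    """Return the (low, high) cost in USD of consuming `tokens` at a tier."""
    low, high = TIER_PRICES_USD_PER_MILLION[tier]
    millions = tokens / 1_000_000
    return (millions * low, millions * high)


# Hypothetical workload: 50 million tokens a month.
for tier in TIER_PRICES_USD_PER_MILLION:
    low, high = cost_range(tier, 50_000_000)
    print(f"{tier:>8}: ${low:,.0f} to ${high:,.0f} per month")
```

The same workload costs between 25 and 50 times more at the ultra tier than at the standard tier, so choosing which tasks deserve which tier is itself a design decision.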

Each new hardware generation doesn’t just cut costs; it unlocks tiers that didn’t previously exist. The premium tier wasn’t viable on Hopper. It became viable on Blackwell. Vera Rubin opens ultra. The infrastructure you deploy this year determines which revenue tiers you can access next year.

The takeaway: Even if you will never own an AI factory, the token price curve set by these factories determines the cost of the intelligence inside your products. Track it the way you track interest rates.

Structured Data Is the Ground Truth and It’s Not Ready

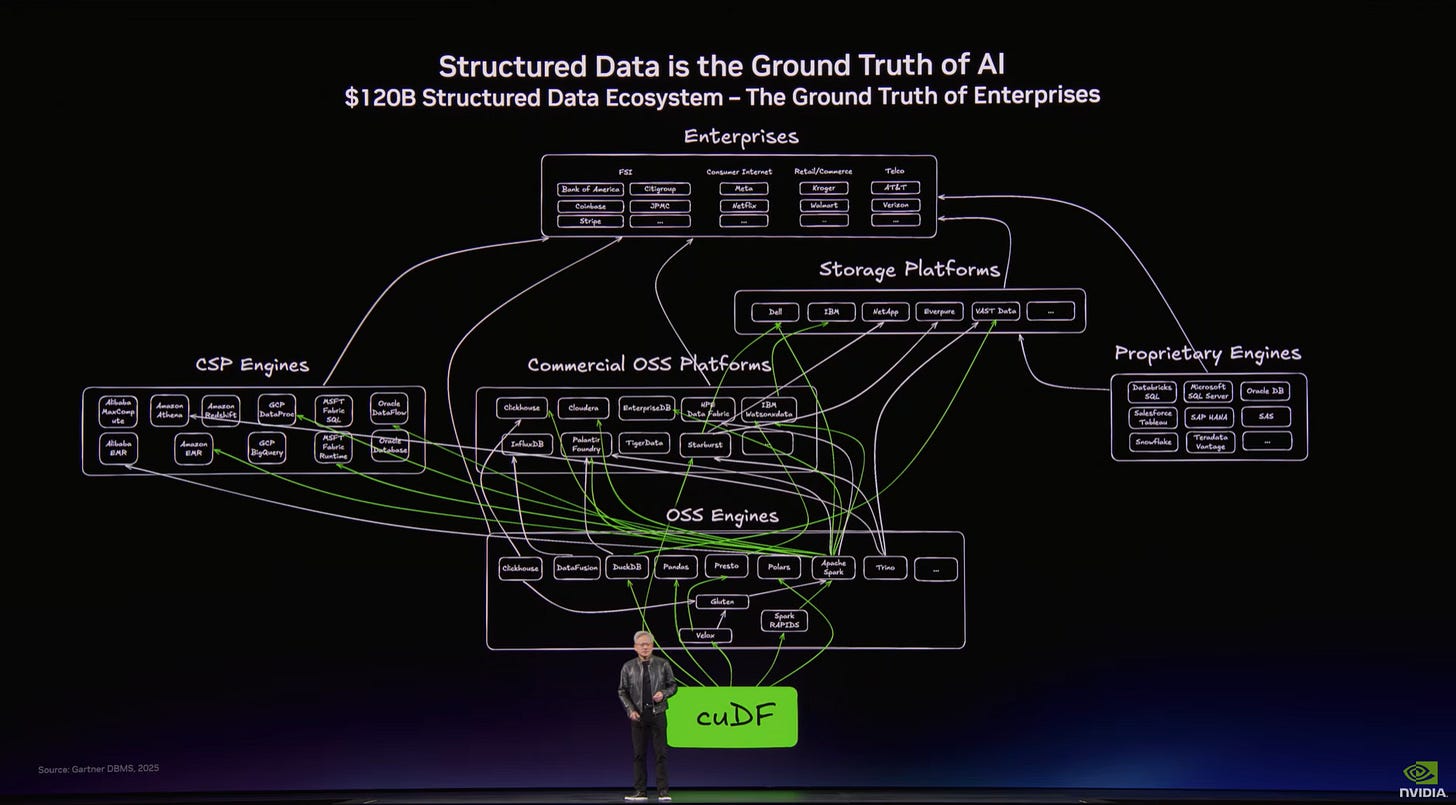

Huang showed what he called his favourite slide, and told the audience not to gasp:

The $120 billion structured data ecosystem — every line connecting enterprises to the engines that process their SQL, Spark, and data frames. Huang’s point: this is the ground truth of business, and it was built for humans querying at human speed. Agents will query at machine speed. It needs to be rebuilt.

This is the entire plumbing of enterprise computing. Every SQL query, every Spark job, every data frame flowing from your ERP to your dashboards. Enterprises across financial services, retail, telecom, and consumer internet, all connected through a web of storage platforms, processing engines, and open-source tools.

Huang’s argument: this infrastructure is the ground truth of your business. It holds every transaction, every supply chain event, every customer record. AI must be grounded in this truth to be trustworthy. Structured data is what makes AI controllable and reliable.

But here’s the problem: all of this was built for humans querying at human speed. In the agentic future, AI agents won’t wait for your nightly batch job. They’ll hammer your databases thousands of times per second. Your data infrastructure becomes the bottleneck, not the AI model.
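One way to size the gap is Little's law, which says the average number of in-flight requests equals the arrival rate multiplied by the average latency. The sketch below applies it to a human-speed and a machine-speed workload. The query rates and the latency are hypothetical and were not given in the keynote.

```python
# Little's law: average in-flight queries = arrival rate * average latency.
# All workload numbers below are hypothetical.


def concurrent_queries(queries_per_second: float,
                       avg_latency_seconds: float) -> float:
    """Average number of queries the database must hold open at once."""
    return queries_per_second * avg_latency_seconds


# Assumed: analysts issue about 2 queries/s in total, agents about 5,000 queries/s,
# and each query takes half a second on the existing system.
human_load = concurrent_queries(queries_per_second=2, avg_latency_seconds=0.5)
agent_load = concurrent_queries(queries_per_second=5_000, avg_latency_seconds=0.5)

print(f"human-speed workload: {human_load:,.0f} concurrent queries")
print(f"agent-speed workload: {agent_load:,.0f} concurrent queries")
```

At the same latency, concurrency scales linearly with query rate, so a system sized for a handful of simultaneous queries has to either absorb thousands or answer each one much faster.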

NVIDIA’s answer is GPU-accelerated data processing.

The takeaway: Your AI strategy will fail at the data layer if your structured data systems can’t serve agents at machine speed. This isn’t a future problem. It’s a now problem.

OpenClaw and Why Every Company Now Needs an Agentic Strategy

Huang spent a significant amount of time on OpenClaw, an open-source agentic framework that, he claimed, surpassed Linux’s 30-year adoption curve in weeks. He compared it to Windows, Linux, HTTP, and Kubernetes: a platform shift that every company must have a strategy for.

OpenClaw is an operating system for AI agents. It manages resources (tools, file systems, LLMs), schedules tasks, decomposes prompts into multi-step plans, spawns sub-agents, and handles multi-modal I/O.
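The description above amounts to a loop of plan, dispatch, and collect. The toy below shows that shape only. It is not OpenClaw's API: every class and method name is hypothetical, and a trivial string splitter stands in for the language model that would do the real planning.

```python
# Toy plan-and-dispatch loop. This is NOT OpenClaw code; all names are
# hypothetical and the "planner" is a string splitter, not an LLM.
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str       # name of a registered tool
    argument: str   # input passed to that tool


@dataclass
class ToyAgentRuntime:
    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[[str], str]) -> None:
        """Make a tool (a resource the agent may use) available by name."""
        self.tools[name] = fn

    def plan(self, prompt: str) -> list[Step]:
        """Decompose 'tool: arg; tool: arg' into ordered steps."""
        steps = []
        for part in prompt.split(";"):
            tool, _, argument = part.partition(":")
            steps.append(Step(tool.strip(), argument.strip()))
        return steps

    def run(self, prompt: str) -> list[str]:
        """Execute each planned step with its tool and collect the results."""
        return [self.tools[step.tool](step.argument) for step in self.plan(prompt)]


runtime = ToyAgentRuntime()
runtime.register("upper", str.upper)
runtime.register("reverse", lambda text: text[::-1])
print(runtime.run("upper: quarterly report; reverse: abc"))
```

A real agent runtime would add scheduling, sub-agents, permissions, and failure handling. The point here is only that the tool registry is where access control would naturally attach.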

The enterprise implication was that every SaaS company will become an agentic-as-a-service company. The $2 trillion enterprise IT industry, built on tools, file systems, and consultants helping humans use tools, is being restructured into a multi-trillion-dollar industry offering specialized AI agents that companies rent.

An agentic-as-a-service company delivers specialized AI agents that perform work on behalf of the enterprise, rather than simply providing tools for humans to operate.

But agents in the corporate network can access sensitive information, execute code, and communicate externally. That’s a security nightmare. NVIDIA’s response is NemoClaw, an enterprise-hardened reference stack with guardrails, privacy routing, and policy engine integration.

The takeaway: “What is your OpenClaw strategy?” is now the same kind of question as “What is your cloud strategy?” was in 2012. If you’re a SaaS company, start planning your agentic-as-a-service offering. If you’re an enterprise, start planning your agent governance framework.

Where Does Your Firm Sit in the New Stack?

This is the question that matters. Not every company produces tokens. Not every company builds models. The GTC keynote implies a value stack that is forming fast:

Some companies produce tokens: hyperscalers and AI-native clouds competing on tokens-per-watt.

Some build the models: OpenAI, Anthropic, Mistral, and now NVIDIA itself with Nemotron, competing on reasoning quality.

Some build orchestration layers: the OpenClaw ecosystem, LangChain, enterprise platforms going agentic. This is where workflow logic and governance live.

Some win by owning domain data and expertise: this is where most established enterprises will create value, by fusing AI with proprietary structured data, regulatory knowledge, and vertical workflows that pure AI companies can’t replicate.

Some own distribution: Uber doesn’t build the autonomous driving AI, but it owns the network where robotaxis deploy.

Some win in regulated or sovereign environments: deploying AI where cloud-native competitors can’t go. Huang highlighted confidential computing, air-gapped deployments with Palantir and Dell, and sovereign AI partnerships.

You need to know which of these positions you occupy, which are defensible, and which are commoditizing beneath you. That is now a board-level question.

What Huang Says About Hiring

Huang said that engineers at NVIDIA, and increasingly across Silicon Valley, are being given annual token budgets as part of their compensation. An engineer making a few hundred thousand in base salary might receive half again as much in token allocation, because access to AI compute makes them an estimated 10× more productive.
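To get a feel for the scale, the sketch below converts such an allocation into token counts at the tier prices quoted earlier in this article. The $300,000 base salary is an assumed stand-in for "a few hundred thousand", and the function names are illustrative.

```python
# Convert a token allocation in dollars into tokens at a given tier price.
# The base salary is a hypothetical example; the 0.5 ratio reflects
# "half again as much" from the text.


def token_allocation_usd(base_salary_usd: float,
                         allocation_ratio: float = 0.5) -> float:
    """Dollar value of the annual token budget."""
    return base_salary_usd * allocation_ratio


def tokens_affordable(budget_usd: float, price_per_million_usd: float) -> float:
    """Number of tokens the budget buys at a price per million tokens."""
    return budget_usd / price_per_million_usd * 1_000_000


budget = token_allocation_usd(300_000)  # assumed base salary
for label, price in [("standard (upper bound)", 6.0),
                     ("premium", 45.0),
                     ("ultra", 150.0)]:
    tokens = tokens_affordable(budget, price)
    print(f"{label:>22}: {tokens / 1e9:.2f} billion tokens per year")
```

Even at the ultra price, the assumed budget buys a billion tokens a year, which gives a sense of how much machine output each engineer is expected to direct.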

“How many tokens come with my job?” is already a recruiting question.

Think about what that means for your talent strategy. The productivity gap between a team with generous AI compute access and one without is not incremental; it is an order of magnitude. The cost of tokens is marginal. The cost of not providing them is losing your best people to firms that do.

The Bottom Line

Jensen Huang gave a two-hour keynote about chips, racks, and robots. But the actual message was about economics. Intelligence is now manufactured at industrial scale. It is priced by grade. It flows through an emerging value chain from silicon to agents. And every company, whether it makes semiconductors or sells insurance, needs to understand where it sits in that chain.

The future according to NVIDIA is a future where everyone becomes a participant in a token economy, and the winners are the ones who figure out their position early.

That conversation should be happening in your boardroom this quarter.

Derived from Jensen Huang’s GTC 2026 keynote, March 16, 2026, San Jose, California.